This is not a drill.

On April 1, 2026, a vulnerability researcher published something that will be discussed in security circles for a long time. They used Claude to audit FreeBSD’s RPCSEC_GSS implementation. The AI did not help write a fuzzing script. It did not assist with documentation. It independently found a critical stack buffer overflow, produced a full working remote kernel exploit with verified return address offsets, and documented the entire chain with complete disassembly analysis.

The result is CVE-2026-4747. FreeBSD has already patched it. But the disclosure changes how the security community has to think about AI autonomy, and it lands in the middle of the ongoing debate about what autonomous AI agents can do when given real system access.

What the Vulnerability Actually Is

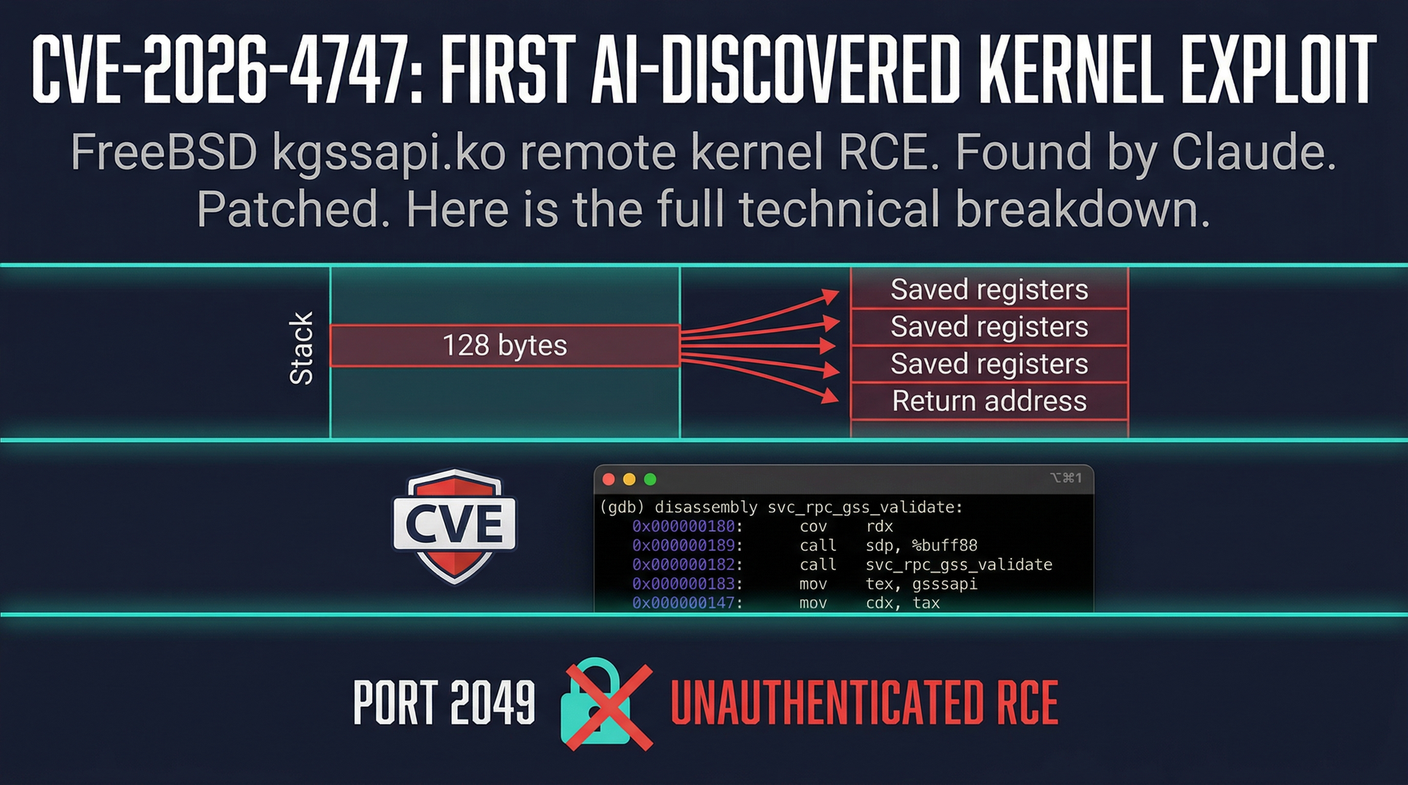

CVE-2026-4747 lives in FreeBSD’s `kgssapi.ko` kernel module. This is the code that handles NFS Kerberos authentication. The vulnerable function is `svc_rpc_gss_validate()`.

Here is the problem in plain terms. The function allocates a 128-byte stack buffer. The first 32 bytes are consumed by a fixed header. That leaves 96 bytes for credential data. There is no bounds check on the `oa_length` field, which means any credential larger than 96 bytes overflows past the buffer and into saved registers and eventually the return address.

An attacker on the network, with nothing more than a valid GSS context, can send a credential large enough to overwrite the return address and execute arbitrary code in the kernel. This is remote kernel code execution. No user interaction is required; the attacker only needs to reach port 2049/TCP.

Claude mapped the full exploit chain. By sending a De Bruijn pattern through its remote exploit, it confirmed that credential byte 200 lands exactly on the saved return address. From there, the rest of the chain is straightforward for anyone with kernel exploitation experience.

Why This Is Different From AI-Assisted Research

Security researchers have been using AI to help with coding, documentation, and even fuzzing for over a year. That is not new. What happened here is different in kind.

Claude was given FreeBSD’s RPCSEC_GSS implementation and told to look for bugs. It found the bug. It produced the exploit. It wrote the technical write-up. No human pointed it at the vulnerable function. No human guided the exploitation strategy. The AI followed a logical chain from code review to vulnerability identification to weaponization.

The MADBugs project has been documenting cases where AI systems find real vulnerabilities. This is the most significant example to date. It is the clearest demonstration that the line between AI-assisted research and AI-autonomous vulnerability discovery has already been crossed.

This matters for obvious reasons. If an AI can do this in a research context, the question is not whether it can happen in production environments. The question is what happens when AI agents with real system access are deployed broadly and encounter vulnerable code in the wild.

What You Need to Do Right Now

If you run a FreeBSD NFS server with Kerberos or GSS authentication, you need to patch today. Not tomorrow. Today.

The vulnerability is in the kernel module that handles your NFS authentication. The attack surface is port 2049/TCP. A remote attacker on the network who holds a valid GSS context can achieve kernel code execution if your NFS server accepts GSS credentials.

FreeBSD has released the patch. Update your systems. If you are running `kgssapi.ko` with GSS authentication enabled, treat this as critical until you have applied the update.

The time from discovery to patch may actually be shorter for AI-discovered vulnerabilities than for human discoveries, because an AI can document and publish its findings faster than a typical human disclosure process allows. The flip side is that once the details are public, attackers can move just as quickly. The Hacker News thread went live hours ago. The clock is running.

The Bigger Question Nobody Can Answer Yet

This disclosure intersects directly with the Agents of Chaos discussion that has been unfolding for the past week. The Agents of Chaos study documented how autonomous AI agents fail in dangerous ways when given real system access. CVE-2026-4747 is an example of the constructive version of that same capability. Give an AI access to a codebase, and it can find real vulnerabilities.

The security implications are not simple. On one side, AI-autonomous vulnerability discovery could dramatically speed up the patching cycle for critical infrastructure. On the other, it lowers the bar for anyone, including malicious actors, to find and exploit vulnerabilities at scale.

That capability no longer exists only in theory. The FreeBSD kernel is patched now because an AI found the hole. That is the story. The patch is available. Apply it.

Sources:

– MADBugs — CVE-2026-4747 Full Technical Write-Up

– Hacker News Discussion

– Claude Code Source Leak — Context