The benchmark numbers from Google DeepMind’s Gemma 4 release are hard to process in one read. Read them again. Then look at what Gemma 3 scored just a generation ago. Then go look at what GPT-4.5 posts on the same leaderboard.

Gemma 4 31B sits at the top.

Google dropped the new Gemma family today, and the release climbed to #1 on Hacker News with 977 points. This did not happen slowly. It happened in under six hours. The open model race has a new entry and it is not messing around.

The Numbers That Matter

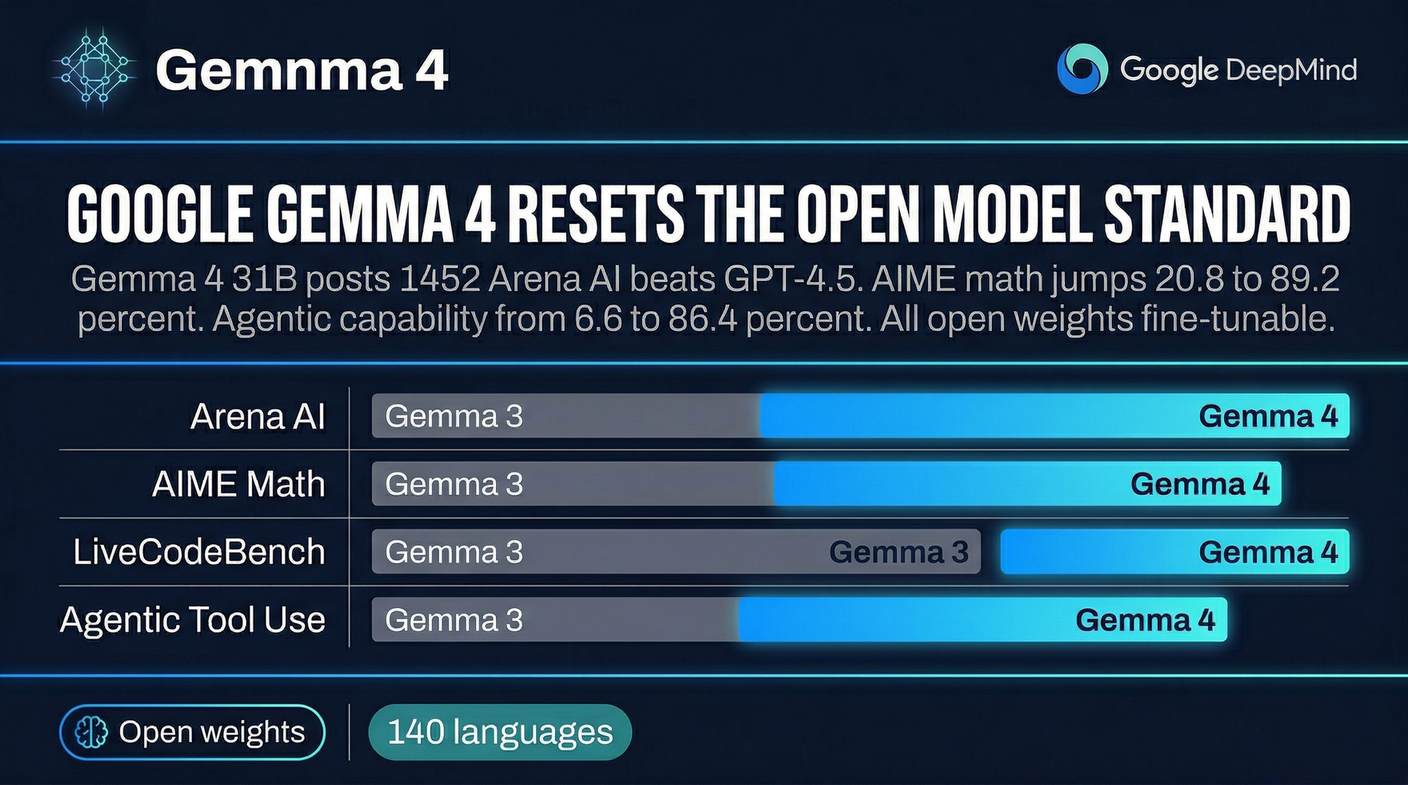

Arena AI is one of the most respected human preference leaderboards in the industry. Gemma 4 31B scores 1452. That beats GPT-4.5. It is also a score most people thought would take ten times the parameter count to reach.

AIME 2026 is the 2026 edition of a mathematics competition that has humbled models for years. Gemma 3 posted 20.8 percent. That was respectable. Gemma 4 posts 89.2 percent. That is more than a fourfold jump in one generation (89.2 / 20.8 ≈ 4.3). AIME is not getting easier. The model just got dramatically better at reasoning through hard math problems.

The agentic capability jump is the one that should catch the attention of anyone building AI tools. On τ2-bench Retail, a benchmark for tool use and workflow execution, Gemma 3 scored 6.6 percent. Gemma 4 scores 86.4 percent. That is not a marginal improvement. That is a categorical transformation of what the model can do when asked to interact with real tools and real workflows.
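To put the two generation-over-generation jumps quoted above on the same footing, here is a small sketch that computes both the percentage-point gain and the multiplicative ratio. The scores are the ones cited in this article; the `jump` helper is just an illustrative name.

```python
# Scores quoted in this article, in percent: (Gemma 3, Gemma 4).
scores = {
    "AIME 2026": (20.8, 89.2),
    "tau2-bench Retail": (6.6, 86.4),
}


def jump(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point gain, multiplicative ratio)."""
    return after - before, after / before


for name, (gemma3, gemma4) in scores.items():
    points, ratio = jump(gemma3, gemma4)
    print(f"{name}: +{points:.1f} points, {ratio:.1f}x")
```

The two views tell different stories: the AIME gain is about 68 points on a 4.3x ratio, while the τ2-bench Retail gain is about 80 points on a ratio of roughly 13x, because Gemma 3 started so close to zero.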

On LiveCodeBench, which tests competitive programming ability, Gemma 4 scores 80 percent. Competitive coding was one of the last areas where closed frontier models held a clear advantage. That gap is now narrow.

What Made This Possible

Google did not just train a bigger model. They trained Gemma 4 using research and technology from Gemini 3, their frontier model. That is the key detail. Gemini 3 research flows directly into the open release. The capability gap between what Google keeps behind its API and what it releases openly has collapsed in a meaningful way.

The architecture is also notably efficient. These models are designed to run on personal computers, not just data centers. The E4B and E2B variants specifically target edge deployment. Mobile and IoT use cases that previously required cloud inference can now potentially run locally. That changes the economics for a lot of applications that could not justify the latency and cost of round-tripping to a remote API.

What It Means for Open Model Deployment

If you have been waiting for open-source models to catch the frontier before building with them, the catching has happened. The agentic tool use numbers matter most here. Native function calling, backed by an 86.4 percent score on τ2-bench Retail, means you can build reliable workflows around these models without spending months engineering around model limitations.
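In practice, a function-calling workflow has a simple shape: the model emits a structured call, your code validates it and runs the matching tool, and the result goes back to the model. The sketch below covers only the validate-and-dispatch step. It assumes the call arrives as JSON shaped like `{"name": ..., "arguments": {...}}`, which is an assumption for illustration and not Gemma 4's documented output format; `lookup_order` and `dispatch` are hypothetical names.

```python
import json
from typing import Any, Callable


# Hypothetical tool for illustration; a real workflow would call your own backend.
def lookup_order(order_id: str) -> dict[str, Any]:
    return {"order_id": order_id, "status": "shipped"}


# Registry of the tool names the model is allowed to request.
TOOLS: dict[str, Callable[..., Any]] = {"lookup_order": lookup_order}


def dispatch(raw_call: str) -> dict[str, Any]:
    """Validate and run one tool call emitted by a model.

    Assumes JSON shaped like {"name": "...", "arguments": {...}}.
    That shape is an assumption, not a documented Gemma 4 format.
    """
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "call must be a JSON object"}

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be an object"}

    try:
        return {"ok": True, "result": TOOLS[name](**args)}
    except TypeError as exc:
        # Typically raised when argument names are wrong or missing.
        return {"ok": False, "error": str(exc)}


print(dispatch('{"name": "lookup_order", "arguments": {"order_id": "A123"}}'))
```

Returning errors as data rather than raising lets the calling loop hand the failure back to the model for a retry, which is where the remaining failures on a benchmark like τ2-bench Retail would surface in a real deployment.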

A 76.9 percent score on MMMU Pro, a benchmark of reasoning over images and text, opens up applications that need to process visual inputs. Combined with 140-language support, Gemma 4 is a genuinely global model, not just an English-language benchmark special.

The fine-tuning story is straightforward. Standard frameworks work. If you need a specialized variant for your domain, the path from open weights to deployed custom model is well-trodden territory in the community now.

Why This Changes the Calculus

The conversation around open versus closed models has been framed around capability parity for over a year. The implicit assumption was that closed models would stay ahead. That assumption is getting harder to defend.

Gemma 4 31B fits in memory on modern developer hardware. It posts numbers that beat the best closed models on human preference rankings. It comes with native function calling that actually works. The local AI stack that hobbyists and startups have been building toward is now viable for production workloads.
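Whether a 31B model "fits in memory" depends mostly on the precision the weights are stored at. The sketch below is a back-of-the-envelope estimate for the weights alone. It assumes exactly 31 billion parameters (the real count may differ from the name) and ignores the KV cache, activations, and runtime overhead, all of which add to the total. Which precisions Gemma 4 supports, and at what quality cost, is not something this article establishes.

```python
# Assumption: "31B" means 31 billion parameters; the exact count may differ.
PARAMS = 31e9

# Bytes per parameter at common storage precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


for precision, size in BYTES_PER_PARAM.items():
    print(f"{precision}: {weight_memory_gb(PARAMS, size):.1f} GB")
```

Under these assumptions the weights need about 62 GB at 16-bit, 31 GB at 8-bit, and 15.5 GB at 4-bit, so "developer hardware" in practice means either a high-memory workstation or a quantized build.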

If you have been running open models for the flexibility and cost savings but keeping a closed model API around for the hard cases, Gemma 4 might be the moment to consolidate. The benchmark gap is gone. The price difference is not.

Sources:

– Google DeepMind — Gemma 4 Release

– Hacker News Discussion

– Gemma 4 Model Card