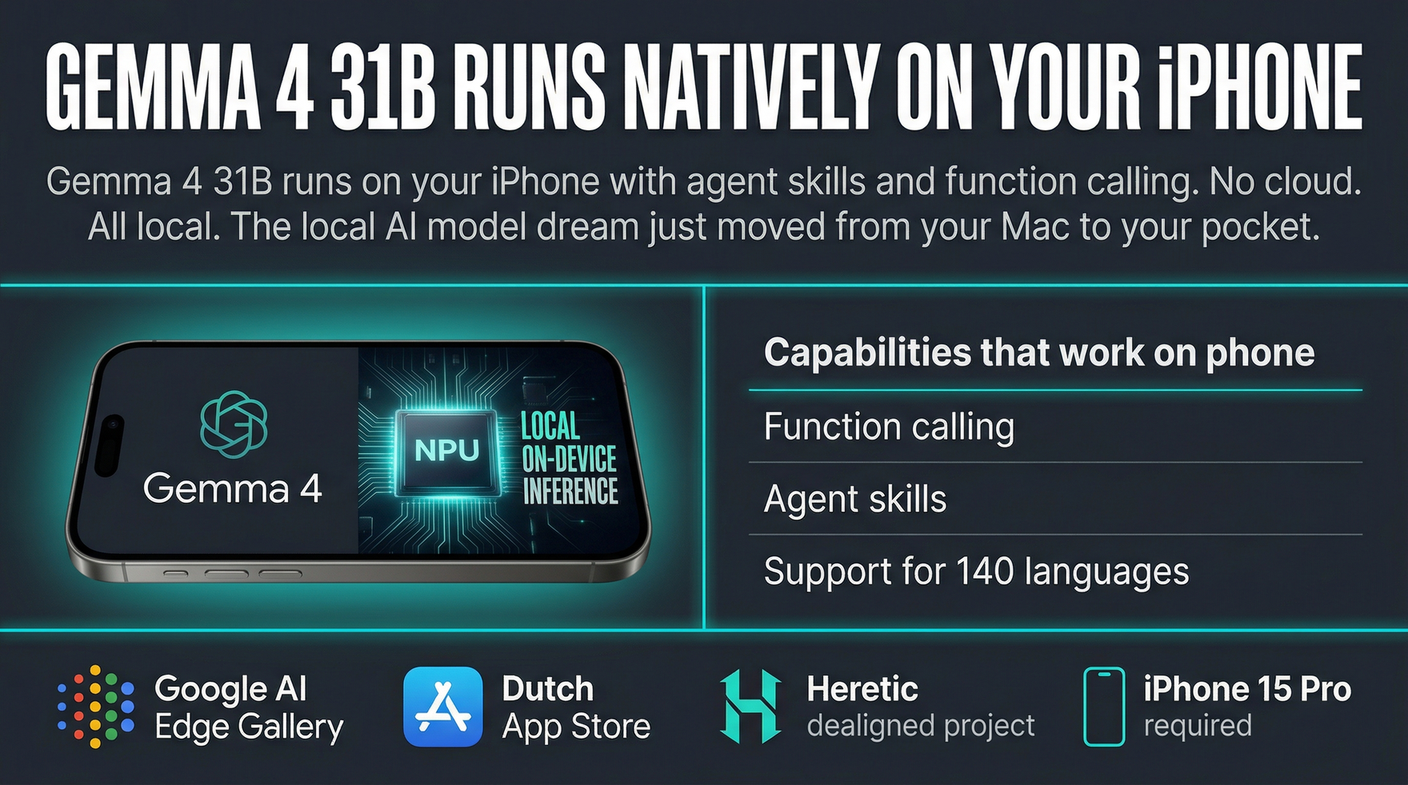

Gemma 4 31B is now on iPhone. Not a simplified version. Not a demo. The actual model with function calling and agent skills running on your Neural Processing Unit, no cloud required.

Google AI Edge Gallery hit the Dutch App Store today with native Gemma 4 support for iPhone 15 Pro and newer. The Hacker News thread is already full of people downloading it and sharing results. This is the release that makes the local AI story concrete. Your phone. Your pocket. A 31B-parameter model doing agentic work on-device.

What You Can Actually Do With It

Gemma 4 31B supports 140 languages, native function calling, and agentic workflows. That last part matters. It is not just answering questions. It can take actions on your phone. Open apps. Modify settings. Chain operations together based on context.
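To make "native function calling" concrete, here is a minimal sketch of the app side of that loop. The model emits a structured call, and the host code on the phone looks up a matching local function and runs it. Everything here is hypothetical. The JSON call format and the tool names `open_app` and `set_brightness` are illustrative assumptions, not Gemma's actual output format or any Google AI Edge Gallery API.

```python
import json
from typing import Callable


# Hypothetical on-device "tools". Names and behavior are illustrative only;
# they are NOT part of Google AI Edge Gallery or any Gemma API.
def open_app(name: str) -> str:
    return f"opened {name}"


def set_brightness(level: int) -> str:
    if not 0 <= level <= 100:
        raise ValueError("level must be between 0 and 100")
    return f"brightness set to {level}"


# Registry mapping tool names (as the model would emit them) to local functions.
TOOLS: dict[str, Callable[..., str]] = {
    "open_app": open_app,
    "set_brightness": set_brightness,
}


def dispatch(call_json: str) -> str:
    """Run one function call emitted by the model as JSON (assumed format)."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        return f"error: unknown tool {call['name']!r}"
    return fn(**call.get("arguments", {}))


def run_chain(calls: list[str]) -> list[str]:
    """Execute a sequence of calls in order and collect each result.

    In a real agent loop, each result would be fed back to the model,
    which would then decide the next call; here the sequence is fixed.
    """
    return [dispatch(c) for c in calls]


if __name__ == "__main__":
    model_output = [
        '{"name": "set_brightness", "arguments": {"level": 40}}',
        '{"name": "open_app", "arguments": {"name": "Notes"}}',
    ]
    for result in run_chain(model_output):
        print(result)
```

The point of the sketch is the split of responsibilities: the model only decides *which* action to take and with what arguments, while the device code actually performs it. That is why all of this can stay local.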

For someone building local AI workflows, this is the piece that changes the hardware math. You are no longer tied to your Mac or your desktop. The inference is happening on a device you carry everywhere.

One HN commenter reported that the agent skills work. They tested it on an iPhone and saw the mobile actions fire correctly, entirely on-device. That is the bar other AI phone assistants have been promising to clear and have not.

Where It Falls Short

The same thread has the honest take on coding performance. Commenters found Gemma 4 31B unimpressive next to Qwen 3.5 27B on code generation tasks, so if you are looking for a mobile coding assistant, this is not it. The model is stronger at document analysis, roleplay, and agentic decision-making. Its strengths are reasoning and action, not code synthesis.

One person tried connecting it to OpenClaw immediately and ran into compatibility issues. That is a tooling problem, not a model problem. The model runs fine. The harness layer for hooking mobile NPU inference into external agent frameworks is still being figured out.

The Safety Layer Is Optional If You Want It to Be

The Heretic project already has a repo for running a dealigned Gemma 4 locally. If you want the model without its built-in safety controls, there is a path to that on your phone. Mobile, local, and unfiltered is now a technically available combination.

Whether that matters to you depends on your use case. For most people, the on-device model with default alignment is plenty capable. For researchers, developers testing alignment behavior, or anyone who wants to run local conversations without content filters, the tools exist.

The local AI model story has been building toward this moment for over a year. Models that run on consumer hardware. Inference that does not round-trip to a server. Privacy by default because the data never leaves your device. Your phone is now part of that story.

Sources:

– Google AI Edge Gallery (App Store)

– Hacker News Discussion

– Dealigned Gemma 4 (GitHub)