After a week of billion-parameter models, corporate lock-in drama, and AI-discovered zero-days, here is something different. Someone built a language model from scratch. Nine million parameters. One hundred and thirty lines of PyTorch. It trains in five minutes on a free Colab GPU and the model talks like a small fish named Guppy.

The Hacker News thread hit 459 points and climbing. The comments are not arguing. People are sharing how long their first training run took and posting the jokes Guppy makes. That almost never happens.

What GuppyLM Actually Is

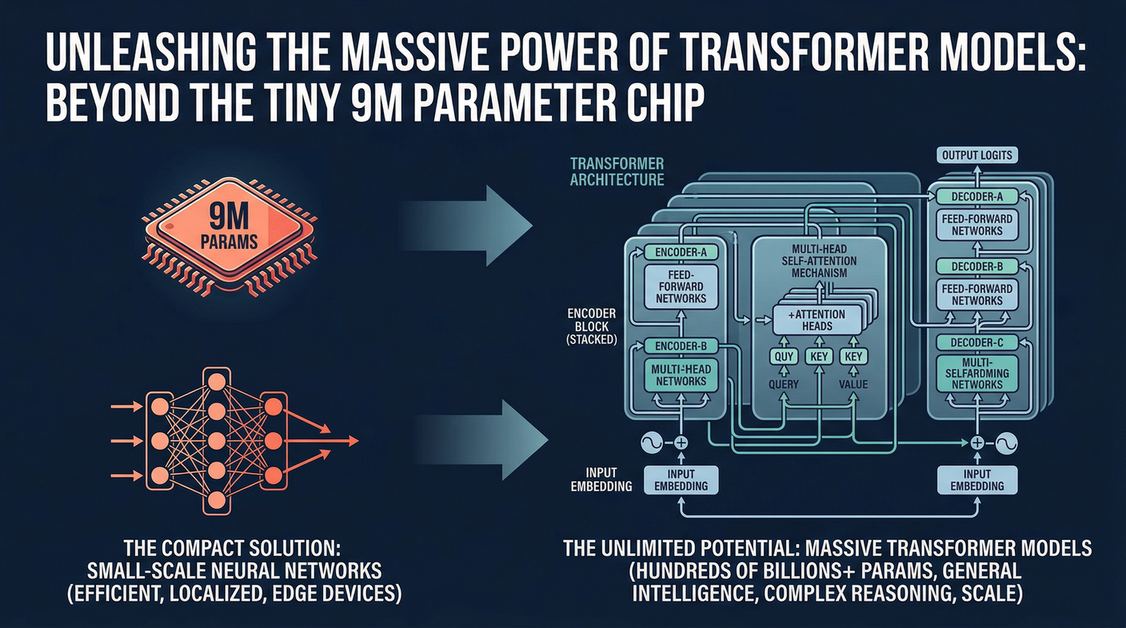

Nine million parameters. Six layers. Three hundred and eighty-four hidden dimension. Six attention heads. Vanilla transformer. No grouped query attention, no rotary positional embeddings, no SwiGLU activations. Just the simplest possible version of the architecture that actually works.

The training data is sixty thousand synthetic conversations. That is it. The Colab notebook runs on a T4 GPU, which is free. When it finishes, you have a checkpoint you can load and chat with immediately.

The model has a personality because the training data has a personality. Guppy thinks the meaning of life is food. Guppy tells fish jokes. Ask Guppy what happened when it hit the wall and it will tell you it is a dam joke, because of course it will.

One quirk worth knowing: the tokenizer has never seen uppercase tokens. Ask Guppy something in all caps and it will respond that it does not know what that means but it is Guppy’s now. The model treats unknown inputs as something to claim rather than something to ignore. That was not engineered. It emerged.

Why This Matters More Than It Sounds

Most people using AI have no idea what is happening inside the box. That is fine for users. It is a problem for anyone trying to evaluate claims, understand limitations, or build on top of these systems without blindly trusting the marketing.

The gap between using AI and understanding AI feels enormous because the existing entry points are bad. Dense papers assume you already know the field. Tutorials skip the parts that are actually confusing. And the tooling hides everything behind abstractions that teach you the tool, not the underlying mechanism.

GuppyLM does not hide anything. You can read the whole thing. The training loop is a for loop. The model is a class. The tokenizer is a few hundred lines. If you have ever wondered what happens during a forward pass or why training converges or what exactly an attention head is doing, you can find out by reading code you can run in your browser.

The Real takeaway

Every large language model you have ever used is built from the same parts. Stacked attention layers, feed-forward networks, token embeddings, positional encodings. GuppyLM proves this by being small enough to hold in your head all at once. It is not a demo. It is not a simplified diagram. It is the same architecture at a scale where you can actually see what is happening.

The model weights are on Hugging Face. The training notebook is live. You do not need to install anything or set up an environment or pay for compute. Open the Colab link, hit run, and in five minutes you have your own tiny language model that thinks the meaning of life is food.

Sometimes the best way to understand something enormous is to build something small.

Sources:

– GuppyLM GitHub (source, weights, breakdown)

– Hacker News Discussion

– GuppyLM Training Notebook (Colab)

– GuppyLM on Hugging Face