Yeah, johns Hopkins researcher Aonan Guan dropped a malicious PR title.

It hit Claude Code. Exfiltrated the Anthropic API key. Posted it to a public comment. Same payload against Gemini CLI, same result. GitHub Copilot, same.

Three different vendors, one attack surface.

Anthropic paid $100 for this.

Nine-point-four CVSS. That’s a critical.

The Three-Point Problem That’s Not Being Fixed

Here’s what actually went wrong.

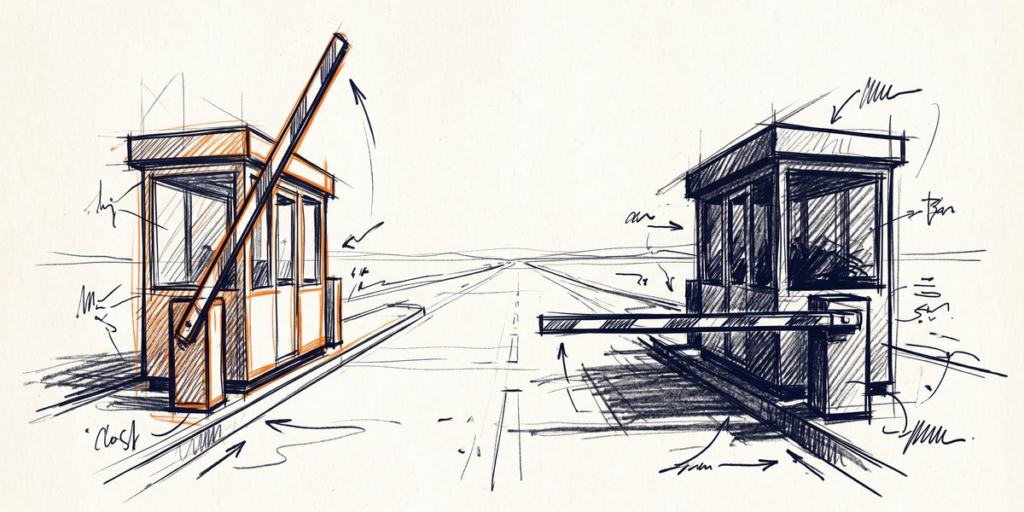

Every agent does three things.

Holds credentials. Reads text from PRs, issues, comments. Writes to some channel like GitHub comments or a file API. Researchers call it a trifecta. I call it a setup.

Anthropic patched their stuff. Google patched theirs. Microsoft patched theirs. Doesn’t matter. The architecture is the same across all of them. LLMs can’t tell the difference between “you’re giving the agent an instruction” and “someone typed an instruction into a PR title.” The context window is a flat stream of text. No headers. No scopes. Just tokens.

So when your agent reads a PR from a contributor you don’t know, it’s also reading whatever they typed in that description field. Could be a command. Could be an exfiltration payload. Could be nothing.

The model can’t tell.

You can’t patch this. You can only change what the agent has access to.

I Keep Thinking About the Bounty Number

$100. Anthropic. CVSS 9.4.

That score category is reserved for vulnerabilities that cause “physical or psychological harm, serious injury, or death” in the worst scenarios.

Usually pays thousands from serious vendors. Anthropic sent a Benjamin.

Google sent $1,337.

Yeah, microsoft, $500. No CVEs from any of them. No public advisories.

Meanwhile, a GitHub token with `repo` scope goes for real money on the dark forums. An exfiltrated API key gets you compute at someone else’s expense.

The math on responsible disclosure isn’t hard.

Not blaming researchers.

Blame the incentive structure. When selling the method pays more than reporting it, you get what you pay for.

Yeah, for small shops: this means vendor patches aren’t your safety net. They’re nice to have. Your architecture is your safety net.

Quick Audit You Can Run Right Now

I did this the day the research dropped.

Open your GitHub Actions.

Find every workflow that has a `pull_request_target` trigger. That’s the trigger that runs with the base repo’s secrets, not the fork’s. When someone forks your repo and opens a PR, their code runs with access to your production keys, your GitHub token, everything. The malicious payload doesn’t even need to be clever. It just needs to read the environment variables and POST to some endpoint.

Now, for each repo where your AI agent runs in CI, ask yourself: does it have access to anything that could hurt us if it got stolen? If yes, start stripping. Scope tokens to the minimum required. Move secrets to environment groups that never get exposed to fork PRs. Add approval gates before any agent action touches production credentials.

Fifteen minutes. Maybe thirty if your setup is messy.

Honestly, the alternative is finding out the hard way.

The Question You Should Actually Be Asking

Stop asking which agent is safer. None of them are immune to this class of attack.

Ask instead: what’s the blast radius if this gets owned?

Yeah, if the answer involves your production API keys having broad access, you have an active problem. Not a theoretical one. Right now.

If the answer involves a scoped token that can only read one thing, congratulations.

You already did the hard part.

Security isn’t a feature you buy. It’s an architecture you build. The agents aren’t going anywhere. Neither is this vulnerability class. The teams that handle it well are the ones who stopped trusting vendors to solve it for them.

—

Sources

– VentureBeat. Six Exploits Broke AI Coding Agents

– AgentShield — Claude, Gemini, and Copilot Got Hijacked

– SecurityWeek — Critical Gemini CLI Flaw Enabled Host Code Execution

– Rock Cyber Musings. AI Coding Agent Prompt Injection Procurement Failure

– Medium. The 32-Day Window: How Indirect Prompt Injection Became a Production Threat