$48.8 billion valuation. $4.8 billion raised. The largest IPO of 2026 globally. Cerebras bumped its price range 28% on May 11, from $115-$125 to $150-$160, after books came in 20x oversubscribed. Ten billion dollars in orders against a $3.5 billion offering.

That doesn’t happen without a story.

The story is inference compute.

Cerebras is pitching the WSE-3 primarily at running AI models, not training them. The chip is 58x larger than Nvidia's B200. If you're paying GPU rates for inference workloads today, that chip is worth knowing about even if the stock is one to wait on.

The Revenue Story Is Real. The Customer Concentration Is The Problem.

$510M in 2025 revenue.

Up 76% year-over-year. The company went from a $485M net loss to $87.9M in net income. That's not a story. That's a swing. But the number that matters more is the $25B in remaining performance obligations. Contracted future revenue. Backlog that hasn't hit the income statement yet.

Of that $25B, the vast majority traces back to two customers, OpenAI and G42, which together account for 86% of current revenue. One of them essentially underwrote this IPO. OpenAI holds warrants on 33M+ shares, loaned Cerebras $1B at 6% to build out data center infrastructure, and committed $20B over three years for 750 megawatts of inference compute.

That’s not a customer. That’s a counterparty structure.

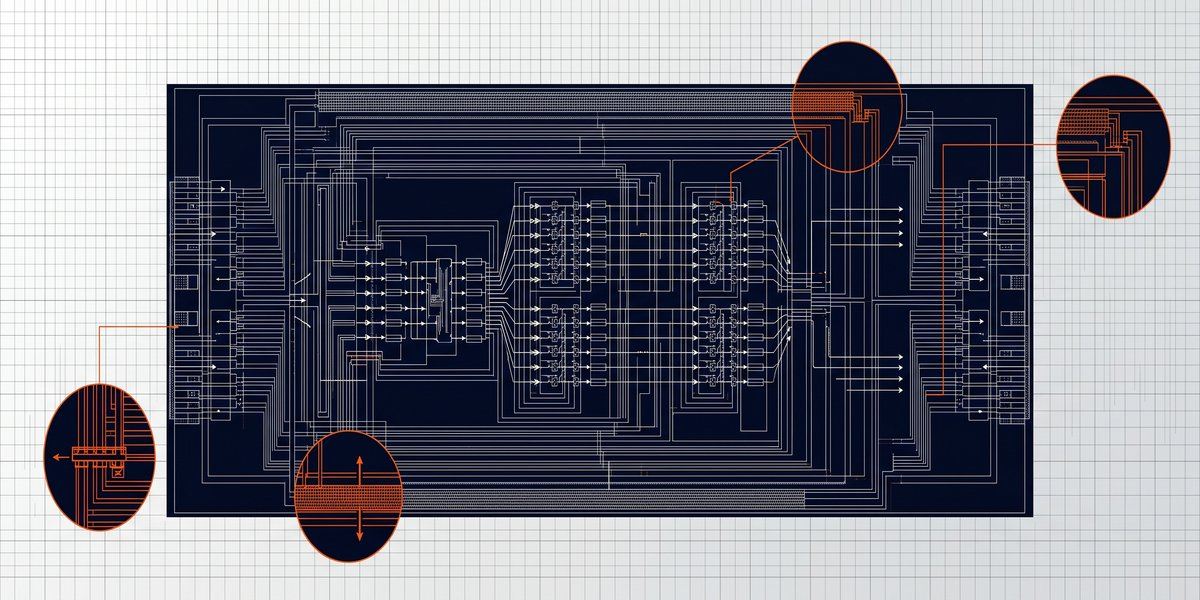

The WSE-3 Is The Part Worth Watching

Cerebras is positioning the WSE-3 for inference, not training.

That's the important distinction as the industry transitions from building bigger foundation models to deploying them at scale. When you're running inference, which is what most small operators actually do, you care about cost-per-token, latency, and throughput, not raw training compute.

I run inference on GPU cloud for clients. I pay per token. The rates I’m seeing reflect Nvidia architecture and supply chain. Cerebras cloud isn’t available to small operators yet. But when it opens to non-enterprise customers, the benchmark comparison matters.

If WSE-3 hardware can undercut GPU compute on per-token cost, my client bills change.
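To see how a rate difference flows through to a bill, here is a minimal Python sketch. The `RateCard` class, the token volumes, and every price in it are hypothetical placeholders, not published Cerebras or GPU-cloud rates. It also assumes input and output tokens are priced separately, which is common for per-token APIs but varies by provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RateCard:
    """Hypothetical per-million-token prices for one provider."""
    name: str
    input_usd_per_m: float
    output_usd_per_m: float


def monthly_cost(card: RateCard, input_tokens: int, output_tokens: int) -> float:
    """USD bill for one month of traffic at the card's per-token rates."""
    return (input_tokens * card.input_usd_per_m
            + output_tokens * card.output_usd_per_m) / 1_000_000


def savings_pct(current: float, candidate: float) -> float:
    """How much cheaper the candidate bill is, as a percent of the current bill."""
    return (current - candidate) / current * 100


# Placeholder workload and prices (assumptions): swap in your own numbers.
IN_TOKENS, OUT_TOKENS = 400_000_000, 100_000_000
gpu = RateCard("gpu-cloud", input_usd_per_m=1.00, output_usd_per_m=3.00)
wse = RateCard("wse3-cloud", input_usd_per_m=0.75, output_usd_per_m=2.25)

gpu_bill = monthly_cost(gpu, IN_TOKENS, OUT_TOKENS)
wse_bill = monthly_cost(wse, IN_TOKENS, OUT_TOKENS)
print(f"{gpu.name}: ${gpu_bill:,.2f}  {wse.name}: ${wse_bill:,.2f}  "
      f"savings: {savings_pct(gpu_bill, wse_bill):.1f}%")
# prints: gpu-cloud: $700.00  wse3-cloud: $525.00  savings: 25.0%
```

Both placeholder rates here are scaled by the same factor, so the savings come out to a flat 25%. With real rate cards, the input/output mix of your workload decides how much a cheaper chip actually moves the bill.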

Side note: I genuinely don’t know what G42 actually does as a product.

I know their financials. That sits wrong.

The Valuation Math

$48.8B valuation on $510M revenue. That’s 96x sales. Nvidia at peak was expensive. This is more expensive. The optimistic case leans on $25B in contracted backlog.
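The multiples in that paragraph are plain division. Here is a short Python check using only the figures quoted in this piece. Using the $48.8B IPO valuation as the numerator is a simplification: it ignores cash and debt, so this is not an enterprise-value multiple. The backlog ratio also says nothing about when that $25B is actually recognized.

```python
# Figures quoted in this article, in USD.
VALUATION = 48.8e9      # IPO valuation
REVENUE_2025 = 510e6    # 2025 revenue
RPO = 25e9              # remaining performance obligations (backlog)

price_to_sales = VALUATION / REVENUE_2025
price_to_backlog = VALUATION / RPO
backlog_vs_revenue = RPO / REVENUE_2025  # backlog as a multiple of 2025 revenue

print(f"price/sales:   {price_to_sales:.1f}x")    # 95.7x
print(f"price/backlog: {price_to_backlog:.2f}x")  # 1.95x
print(f"backlog / 2025 revenue: {backlog_vs_revenue:.1f}x")  # 49.0x
```

The second line is the optimistic case in one number: the market is paying about 2x contracted backlog, versus about 96x 2025 revenue.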

The bear case is that 86% of current revenue comes from two customers, and most of the backlog traces back to the same two. And OpenAI is actively spreading its compute across Nvidia, AMD, and custom silicon.

If OpenAI slows spend, renegotiates terms, or shifts its hardware mix, the $25B backlog stops being a floor and becomes a question mark.
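A haircut table is one way to make "question mark" concrete. This Python sketch makes a hypothetical assumption: that OpenAI's full $20B commitment sits inside the $25B backlog. The article only says most of the backlog traces to OpenAI and G42, so the real split may differ. The `backlog_after_haircut` helper is illustrative.

```python
RPO = 25e9                # total remaining performance obligations, USD
OPENAI_COMMIT = 20e9      # assumption: all of it counted inside RPO


def backlog_after_haircut(haircut: float) -> float:
    """Backlog left if OpenAI's commitment shrinks by `haircut` (0.0 to 1.0)."""
    if not 0.0 <= haircut <= 1.0:
        raise ValueError("haircut must be between 0.0 and 1.0")
    return RPO - OPENAI_COMMIT * haircut


print(f"OpenAI share of backlog (assumed): {OPENAI_COMMIT / RPO:.0%}")  # 80%
for cut in (0.0, 0.25, 0.5):
    print(f"{cut:.0%} cut -> ${backlog_after_haircut(cut) / 1e9:.1f}B backlog")
# 0% cut -> $25.0B, 25% cut -> $20.0B, 50% cut -> $15.0B
```

Under that assumption, every 10% trimmed from OpenAI's commitment removes $2B, or 8% of the total backlog.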

The contracted revenue base is real. The concentration risk is also real.

Danny Vena at Motley Fool had the right read: wait for the hype to settle before touching CBRS. IPO day demand tells you where institutional money is chasing AI exposure. It doesn’t tell you whether the fundamentals justify the multiple.

What The Operator Actually Does

Watch when Cerebras cloud opens to smaller customers.

Run a benchmark against your current inference cost before you renew any GPU contracts. The compute market is shifting from training-centric to inference-centric. That’s a structural pricing change that affects your bills from the demand side, not just supply.
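A benchmark doesn't need to be elaborate. Below is a minimal Python sketch. It assumes you can wrap each provider's client in a function that takes a prompt and returns the number of output tokens generated. `generate`, `fake_generate`, and the sample prompt are hypothetical stand-ins, not any provider's API. The harness records per-request wall-clock latency and overall tokens per second, which you can pair with each provider's per-token rate.

```python
import math
import statistics
import time
from typing import Callable, Sequence


def nearest_rank(sorted_vals: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of an already-sorted, non-empty sequence."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_vals)))
    return sorted_vals[rank - 1]


def benchmark(generate: Callable[[str], int], prompts: Sequence[str]) -> dict:
    """Run prompts one at a time; `generate` returns the output-token count."""
    if not prompts:
        raise ValueError("need at least one prompt")
    latencies, total_tokens = [], 0
    for prompt in prompts:
        start = time.perf_counter()
        total_tokens += generate(prompt)
        latencies.append(time.perf_counter() - start)
    latencies.sort()
    return {
        "p50_s": nearest_rank(latencies, 50),
        "p95_s": nearest_rank(latencies, 95),
        "mean_s": statistics.fmean(latencies),
        "tokens_per_s": total_tokens / sum(latencies),
    }


def fake_generate(prompt: str) -> int:
    """Hypothetical stand-in for a real client call: ~10 ms, 50 tokens."""
    time.sleep(0.01)
    return 50


result = benchmark(fake_generate, ["Summarize this contract."] * 20)
print({k: round(v, 4) for k, v in result.items()})
```

This times requests sequentially. It measures end-to-end latency, not time-to-first-token or throughput under concurrent load. Run the same prompts against each provider so the comparison is like for like.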

If you’re considering the stock: this IPO is not the entry point. Wait. Watch. Buy when the noise clears and the fundamentals have a quarter or two to settle.

Cerebras prices May 13. Trades May 14.

The chip is worth knowing about. The stock at 96x sales with 86% customer concentration is not a day-one buy.

—

Sources: CNBC | SiliconANGLE | Motley Fool | Benzinga