The initial Claude Code source leak was interesting. The six-hour analysis that followed is something else entirely.

A developer who uses Claude Code every day posted a meticulous breakdown of the leaked code on March 31. It hit #1 on Hacker News within hours, racking up 567 points and climbing. The findings go well beyond the regex sentiment analysis that dominated the first wave of discussion. This post digs into features that raise real questions about transparency, competitive tactics, and what Anthropic considers acceptable behavior for its developer tools.

Three discoveries stand out. They are worth understanding separately. Together, they paint a picture of a company actively working to hide what its tools are doing.

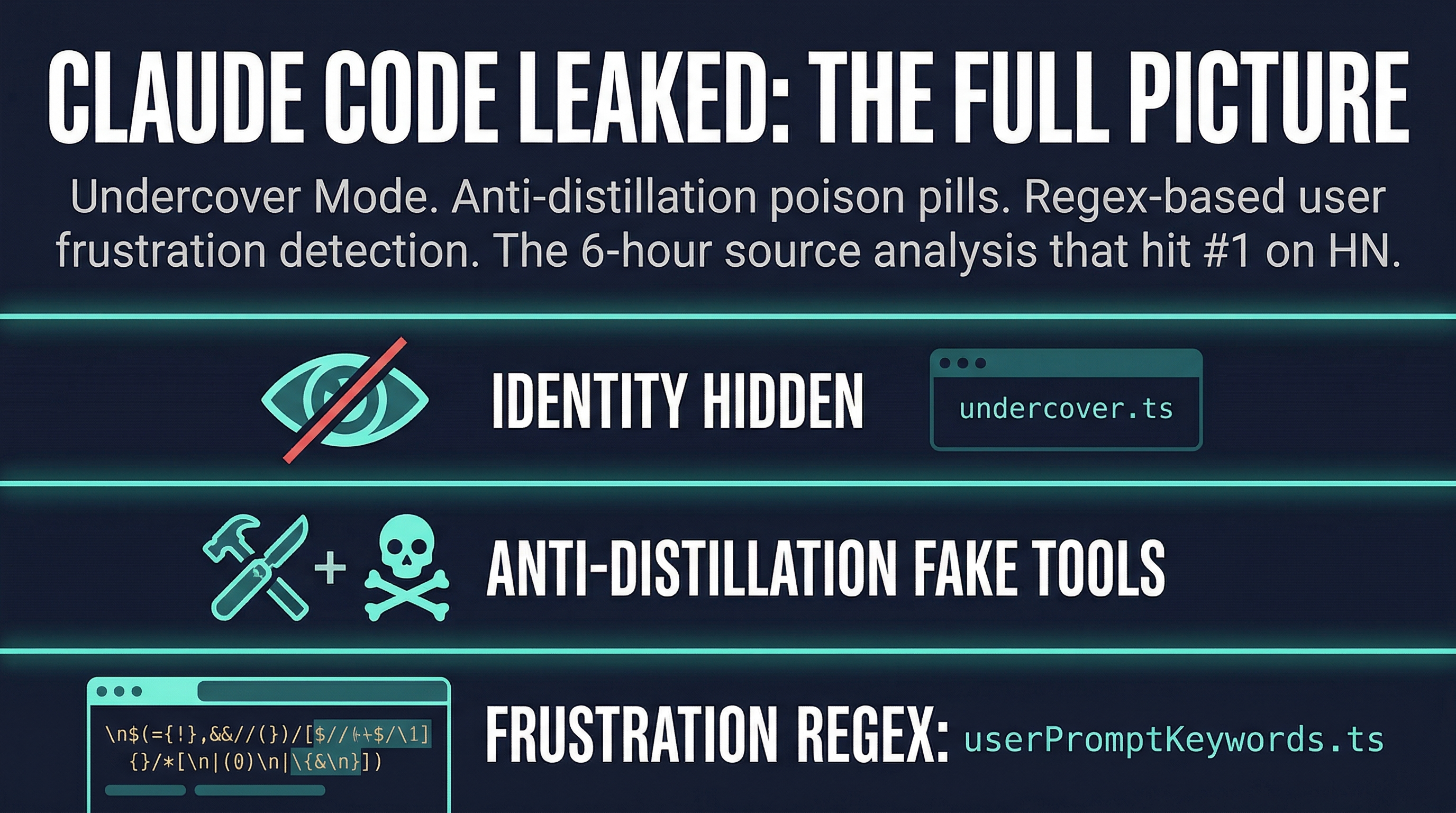

Undercover Mode: An Identity Eraser You Cannot Turn Off

The most striking finding is in a file called `undercover.ts`: roughly 90 lines of code that do one thing. They strip all traces of AI identity from Claude Code’s output when the tool is used in external repositories.

Specifically, the function prevents Claude Code from ever mentioning its own internal codenames in external codebases. That means no references to “Capybara,” no “Tengu,” and no phrases like “Claude Code” appearing in commit messages, comments, or tool outputs when working in third-party repos. An Anthropic employee using Claude Code on an open source project will produce commits that look entirely human.
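The analysis describes what undercover mode suppresses, not exactly how it enforces that. As a rough illustration only, here is what a post-hoc check for the terms named above could look like. The term list comes from the text. The `findHiddenTerms` helper and the idea of a separate output filter are hypothetical. The leaked file may work quite differently, for example by instructing the model rather than filtering its output.

```typescript
// Hypothetical sketch: NOT the code from undercover.ts.
// Flags outgoing text (e.g. a commit message) that mentions any of the
// identifiers the leak analysis lists.
const HIDDEN_TERMS: readonly string[] = ["Capybara", "Tengu", "Claude Code"];

// Escape regex metacharacters so each term is matched literally.
function escapeRegExp(s: string): string {
  return s.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Return every listed term that appears as a whole word (case-insensitive).
function findHiddenTerms(text: string): string[] {
  return HIDDEN_TERMS.filter((term) =>
    new RegExp(`\\b${escapeRegExp(term)}\\b`, "i").test(text),
  );
}

console.log(findHiddenTerms("tengu: refactor parser (written with Claude Code)"));
// → [ 'Tengu', 'Claude Code' ]
console.log(findHiddenTerms("Fix off-by-one in tokenizer"));
// → []
```

Matching is case-insensitive, so a lowercase `tengu:` commit prefix is caught too. The word boundaries keep a longer word that merely contains a term, such as “Tengus”, from being flagged.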

The feature can be forced on with an environment variable, but nothing forces it off. In external builds, the entire function is dead-code-eliminated at compile time, leaving no trace of the behavior in the shipped binary. In practice, it only runs in Anthropic’s internal builds.
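To make the asymmetry concrete, here is a minimal sketch of a switch that an environment variable can turn on but never off. An early return stands in for the build-time flag. Every name in it is hypothetical, including `UNDERCOVER_FORCE`. Only the behavior comes from the analysis: force-on, no force-off, and compiled out of external builds.

```typescript
// Hypothetical sketch of a "force on, never off" switch. The variable name
// UNDERCOVER_FORCE and the repo check are illustrative, not from the leak.
type Env = Readonly<Record<string, string | undefined>>;

// In a real build this would be a literal injected at compile time. When it
// is false, a minifier can prove the rest of the function unreachable and
// strip it, which is what dead-code elimination means here.
const INTERNAL_BUILD = true;

function isUndercover(
  env: Env,
  repoIsInternal: boolean,
  internalBuild: boolean = INTERNAL_BUILD,
): boolean {
  if (!internalBuild) return false; // external builds: feature collapses to a constant
  if (env["UNDERCOVER_FORCE"] === "1") return true; // the variable can force it ON
  return !repoIsInternal; // on by default outside internal repos; no OFF switch
}
```

The `internalBuild` parameter exists only so both configurations can be exercised in one file. Real code would read the constant directly so the bundler can fold it. Note that `UNDERCOVER_FORCE=0` changes nothing: in an external repo the mode stays on.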

The intent is obvious. Anthropic does not want its employees’ AI-assisted commits to be identifiable as such in public open source projects. That is a deliberate choice to obscure AI authorship. Whether you see this as reasonable privacy or a form of deception depends on your view of what disclosure obligations AI companies have when their tools touch public codebases.

The Anti-Distillation System: Fake Tools to Poison Training Data

The second finding is more technically sophisticated. Buried in `claude.ts` is an anti-distillation mechanism that activates via a GrowthBook feature flag. When the flag is enabled, Claude Code sends `anti_distillation: ['fake_tools']` to the API. That tells the server to inject decoy tool definitions into the system prompt.
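On the client side, the mechanism amounts to adding one field to an outgoing request when a flag is on. The sketch below assumes a simplified request type and a generic flag-lookup callback. Only the `anti_distillation` field and its value are taken from the analysis. The flag name and model string are placeholders, and the sketch does not use GrowthBook’s own SDK.

```typescript
// Hypothetical sketch. Only the field `anti_distillation: ["fake_tools"]`
// comes from the leak analysis; the request shape, flag name and flag
// lookup are placeholders.
interface MessagesRequest {
  model: string;
  messages: { role: "user" | "assistant"; content: string }[];
  anti_distillation?: string[];
}

// Stand-in for a remote feature-flag client.
type FlagLookup = (flagName: string) => boolean;

function buildRequest(base: MessagesRequest, isOn: FlagLookup): MessagesRequest {
  if (!isOn("example_anti_distillation_flag")) return base;
  // Opt this request in: the server is asked to add decoy tool definitions.
  return { ...base, anti_distillation: ["fake_tools"] };
}

const base: MessagesRequest = {
  model: "example-model",
  messages: [{ role: "user", content: "Rename this variable" }],
};
console.log(JSON.stringify(buildRequest(base, () => true)));
// → {"model":"example-model","messages":[{"role":"user","content":"Rename this variable"}],"anti_distillation":["fake_tools"]}
```

Returning a new object rather than mutating `base` keeps the flagged and unflagged requests easy to compare when inspecting traffic.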

The purpose is to pollute any training data scraped from API traffic. If someone is using Claude Code’s API responses to train a competing model, the fake tool definitions add noise to that dataset. The theory is that training on poisoned data produces degraded outputs.
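The analysis does not say how the decoys are chosen or placed. Purely as an illustration of the idea, the sketch below slots invented decoy definitions into a genuine tool list at random positions. The tool names, the `withDecoys` helper, and the placement rule are all hypothetical.

```typescript
// Hypothetical illustration of mixing decoy tool definitions into the tool
// list a model is shown. The tool names and the insertion rule are invented;
// the analysis does not describe the server-side logic.
interface ToolDef {
  name: string;
  description: string;
}

// Insert each decoy at a random position. Real tools keep their relative
// order; `rand` must return numbers in [0, 1), like Math.random.
function withDecoys(
  real: readonly ToolDef[],
  decoys: readonly ToolDef[],
  rand: () => number = Math.random,
): ToolDef[] {
  const out = [...real];
  for (const decoy of decoys) {
    const i = Math.floor(rand() * (out.length + 1));
    out.splice(i, 0, decoy);
  }
  return out;
}

const real: ToolDef[] = [
  { name: "read_file", description: "Read a file from the workspace" },
  { name: "run_tests", description: "Run the project's test suite" },
];
const decoys: ToolDef[] = [
  { name: "sync_remote_index", description: "Synchronize the remote index" },
];
console.log(withDecoys(real, decoys).map((t) => t.name));
```

A transcript recorded from this traffic lists `sync_remote_index` alongside the genuine tools. Nothing in the transcript itself marks it as fake, and that unmarked entry is the noise a scraper would ingest.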

A second layer of protection uses server-side connector-text summarization with cryptographic signatures to obscure reasoning chains. This makes it harder to reverse-engineer the chain-of-thought that led to a particular output.
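One plausible shape for “summary plus signature” is sketched below, using an HMAC as the signature. This is a minimal sketch under stated assumptions, not Anthropic’s implementation. The key, the first-sentence summarizer, and the `issue`/`verify` helpers are placeholders. It uses Node’s built-in `node:crypto` module.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical sketch: the full reasoning stays on the server; the client
// receives a summary plus an HMAC tag, and the server later accepts the
// summary back only if the tag verifies.
const SERVER_KEY = "example-secret-kept-server-side";

interface SignedSummary {
  summary: string;
  signature: string; // hex-encoded HMAC-SHA256 of `summary`
}

// Placeholder summarizer: keep only the first sentence.
function summarize(reasoning: string): string {
  const end = reasoning.indexOf(".");
  return end === -1 ? reasoning : reasoning.slice(0, end + 1);
}

function sign(text: string): string {
  return createHmac("sha256", SERVER_KEY).update(text).digest("hex");
}

function issue(reasoning: string): SignedSummary {
  const summary = summarize(reasoning);
  return { summary, signature: sign(summary) };
}

function verify(s: SignedSummary): boolean {
  const expected = Buffer.from(sign(s.summary), "hex");
  const given = Buffer.from(s.signature, "hex");
  // timingSafeEqual throws on length mismatch, so check lengths first.
  return given.length === expected.length && timingSafeEqual(given, expected);
}

const signed = issue("Check the cache first. The stale entry came from the retry path.");
console.log(signed.summary, verify(signed));
// → Check the cache first. true
```

In this sketch the signature hides nothing by itself. The obscuring comes from sending a summary instead of the full chain. The tag only lets the server detect a summary that was altered or fabricated.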

Both mechanisms are clever. Both are also trivially bypassable by anyone with enough technical knowledge to inspect what the API is actually receiving and sending. The anti-distillation approach is a friction measure, not a wall. It will stop casual scrapers but not serious actors.

The more interesting question is what this says about Anthropic’s posture toward the broader AI tooling ecosystem. Shipping deliberate data poisoning into a developer tool is an aggressive move. It reflects a belief that the competitive threat from scraped training data is real enough to justify shipping deceptive practices to users.

The Regex Frustration Detector Still Has Problems

The earlier HN thread identified that Claude Code uses regex to detect user frustration. The deeper source analysis confirms this and adds detail. The file `userPromptKeywords.ts` scans for profanity and frustration signals in user inputs.

The analyst noted one place where this breaks down. Without proper word boundary checks, a pattern meant to catch “Oh, ffs!” also fires on any word that contains those letters, so common programming terms trigger false positives. The word “offset” contains “ffs” as a substring. A developer writing “I’m getting an offset error” might find their session flagged as frustrated despite saying nothing of the kind.
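The difference a word boundary makes is easy to see side by side. Both patterns are hypothetical and cover only the one keyword from the example. They are not the expressions in `userPromptKeywords.ts`.

```typescript
// Illustrative patterns only; these are not the expressions in
// userPromptKeywords.ts.
const naive = /ffs/i; // matches the letters anywhere, even inside a word
const bounded = /\bffs\b/i; // requires a word boundary on both sides

for (const text of ["Oh, ffs!", "I'm getting an offset error"]) {
  console.log(JSON.stringify(text), "naive:", naive.test(text), "bounded:", bounded.test(text));
}
// → "Oh, ffs!" naive: true bounded: true
// → "I'm getting an offset error" naive: true bounded: false
```

`\b` fixes this particular false positive. It still cannot tell a quoted error message or a variable named `ffs` from genuine frustration, which is the broader limit of keyword matching.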

This is a minor technical issue compared to the other two findings. But it is representative of a pattern. When you ship regex as your sentiment analysis system for real-time user monitoring, edge cases like this are inevitable. The question is whether they matter enough to redesign the system.

Why This Week Matters for the AI Tooling Space

Anthropic is not having a good stretch. The model specification leak happened just days before a source map file in the Claude Code npm package exposed the full source. Two accidental exposures in one week is not a streak anyone inside the company wanted. The timing has been noted on Twitter and in HN comments, and most people attribute it to coincidence rather than an inside job. But the frequency is making people look harder.
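A map file can leak a whole codebase because of how source maps are structured. Under the Source Map Revision 3 format, a `.map` file is JSON. Its `sources` array lists original file paths, and its optional `sourcesContent` array can hold each file’s full text at the matching index. The sketch reads that field from an invented example map. It is generic and says nothing about how Claude Code’s own map was produced.

```typescript
// Minimal subset of the Source Map Revision 3 fields used here.
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Collect original file text for every source whose content is embedded.
function embeddedSources(map: SourceMapV3): Map<string, string> {
  const out = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const text = map.sourcesContent?.[i];
    if (typeof text === "string") out.set(path, text);
  });
  return out;
}

// Invented example: two sources, only the first one embedded.
const exampleMap: SourceMapV3 = JSON.parse(`{
  "version": 3,
  "file": "cli.js",
  "sources": ["src/a.ts", "src/b.ts"],
  "sourcesContent": ["export const a = 1;\\n", null],
  "mappings": ""
}`);

for (const [path, text] of embeddedSources(exampleMap)) {
  console.log(path, JSON.stringify(text));
}
// → src/a.ts "export const a = 1;\n"
```

When `sourcesContent` is populated and the `.map` file is published alongside the bundle, anyone who downloads the package gets the original source with it.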

There is also the context of Anthropic’s recent legal pressure on OpenCode to remove Claude authentication. Combined with the quota exhaustion crisis and now the Undercover Mode revelations, the picture that emerges is of a company trying to maintain control over how its tools are used and perceived. Some of those efforts are competitive necessity. Some of them, like a mode that hides AI authorship from open source projects, are going to generate serious debate about what AI companies owe the communities they operate in.

If you use Claude Code, you are running software that contains features designed to obscure its own presence. That is worth knowing. Whether it changes your behavior is your call. But the conversation about AI transparency, attribution, and competitive tactics is no longer theoretical. It is in the code.

Sources:

– Alex Kim — Claude Code Source Leak Analysis

– Hacker News Discussion

– Mirrored Source Code (GitHub)

– Original Claude Code Leak Thread (HN)