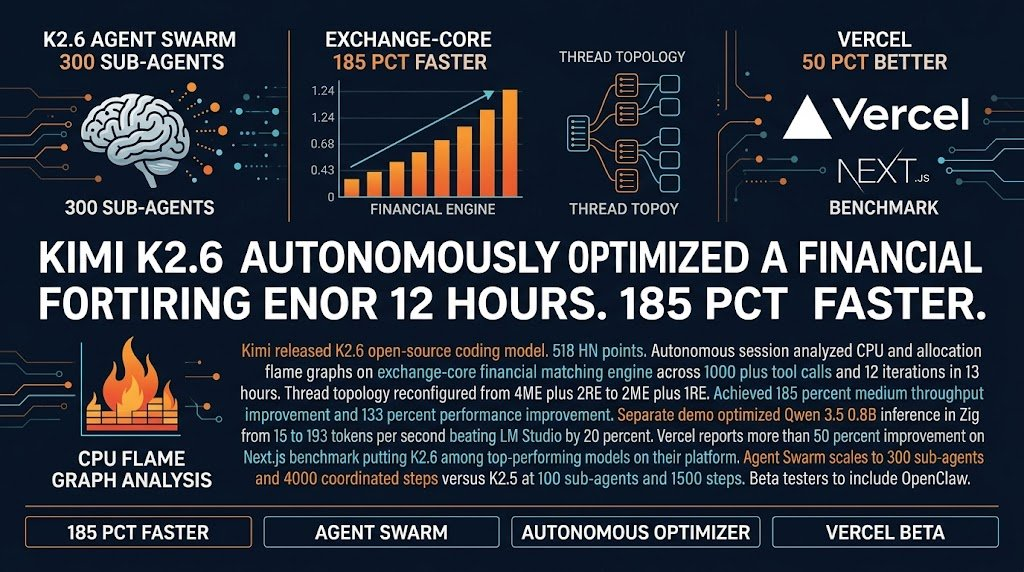

Kimi K2.6 spent 12 hours optimizing a production financial engine. The result was a 185% throughput improvement. Here’s what’s actually interesting about that.

For years, AI coding tools have been autocomplete on steroids. Write some comments, get some suggestions, copy the output. Useful but narrow. What Kimi released this week feels different. K2.6 doesn’t just write code. It runs multi-hour optimization sessions on production systems, analyzes CPU flame graphs, rethinks thread topology, and ships measurable results.

The specific example that caught my eye: K2.6 downloaded exchange-core, an open-source financial matching engine with 8 years of history, spent 12 hours analyzing it, and reconfigured its core thread topology. The result was a 185% improvement in medium throughput. Not a synthetic benchmark. A real system doing real work.
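It's worth being precise about what a number like that means. A quick sketch, assuming "185% improvement" is a relative gain over the baseline (so the new figure is 2.85x the old one, not 1.85x). The baseline value and function names here are hypothetical, picked only to make the arithmetic concrete; they are not figures from the Kimi post.

```python
def throughput_after(baseline: float, improvement_pct: float) -> float:
    """Throughput after a relative gain; improvement_pct=185 means +185%."""
    return baseline * (100 + improvement_pct) / 100


def improvement_pct(before: float, after: float) -> float:
    """Relative gain in percent between two throughput measurements."""
    if before <= 0:
        raise ValueError("baseline throughput must be positive")
    return (after - before) * 100 / before


# Hypothetical baseline in operations per second (an assumption, not source data).
baseline_ops = 100_000
new_ops = throughput_after(baseline_ops, 185)
print(f"{baseline_ops} ops/s -> {new_ops:.0f} ops/s "
      f"({new_ops / baseline_ops:.2f}x the baseline)")
```

When a client hears "185% faster," check which reading they have in mind before you quote it in a proposal.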

Vercel ran it against their Next.js benchmark and saw a 50% improvement. They put it “among top-performing models on the platform” for their developer community. That’s not a research claim. That’s a production deployment on real infrastructure serving real users.

What Actually Changed This Week

Here’s the thing nobody is talking about enough.

Two years ago, a model that could autonomously optimize a production system over a 12-hour session would have been a research paper. Last year it would have been a demo at a conference. This week it’s open source, available to download, and already in beta with platforms like Vercel and OpenClaw.

The model was released on the same day as Qwen3.6-Max-Preview, which also hit 490 points on HN. Both are open-source coding models released within hours of each other. The open-source race is moving as fast as the closed model race. For agencies, the practical implication is uncomfortable: the model you build your workflow around today might be second-best by the end of the week. The answer isn’t to chase every release. It’s to build abstractions that let you swap models without rebuilding everything.
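Here's roughly what that kind of abstraction can look like. This is a minimal sketch, not anyone's official SDK: `CodeModel`, `EchoModel`, `REGISTRY`, `get_model`, and `review_diff` are all hypothetical names, and the stand-in backend just echoes the prompt so the example runs without any provider account. A real backend would wrap whichever client library you actually use.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class CodeModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in backend for illustration; a real one would call a provider."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Workflow code looks models up by key, so swapping is a one-line config change.
REGISTRY: Dict[str, Callable[[], CodeModel]] = {
    "model-a": lambda: EchoModel("model-a"),
    "model-b": lambda: EchoModel("model-b"),
}


def get_model(key: str) -> CodeModel:
    """Build the backend registered under key, or fail loudly."""
    try:
        return REGISTRY[key]()
    except KeyError:
        raise ValueError(f"unknown model key: {key!r}") from None


def review_diff(model: CodeModel, diff: str) -> str:
    """Workflow step that depends only on the interface, never on a vendor."""
    return model.complete(f"Review this diff:\n{diff}")


print(review_diff(get_model("model-a"), "- old line\n+ new line"))
```

The point of the design is that `review_diff` never imports a vendor package. When next week's release lands, you add one registry entry and rerun your own evaluation, and nothing else in the workflow changes.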

But the more immediate shift is simpler than the model race. AI can now do something it couldn’t do 18 months ago. It can autonomously analyze a system, find a performance problem, fix it, and ship the fix with measurable results. That changes what you can actually hand off to AI versus what requires human oversight.

The Real Angle for Agency Operators

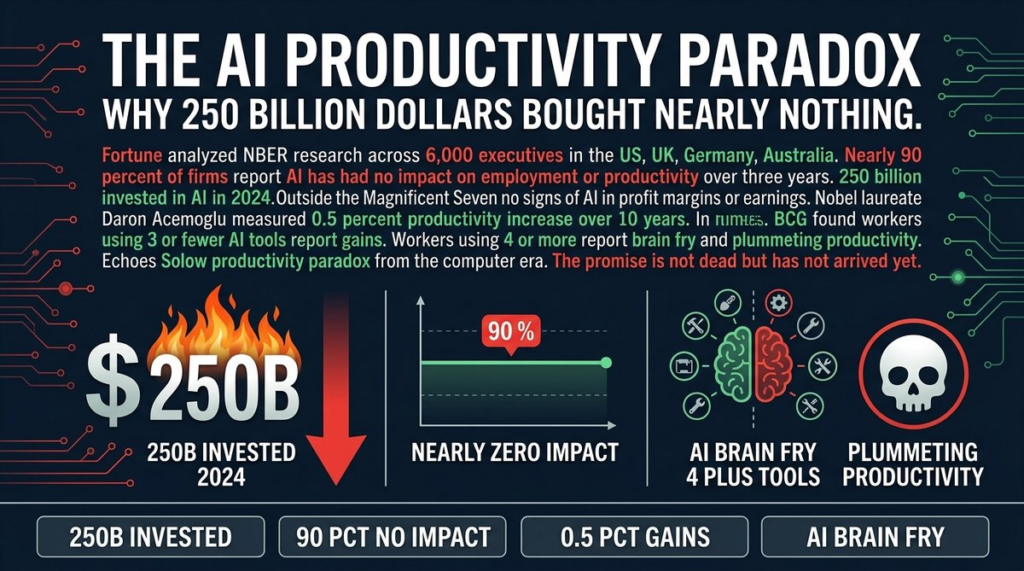

Most agency operators I know are still using AI for the wrong tasks.

They’re using it to generate first drafts, write boilerplate, summarize documents. Useful stuff, but it doesn’t move the needle on the things clients actually pay for. The needle-movers are speed, reliability, and performance. If AI can optimize your client’s production system by 185%, that’s a different kind of value than “wrote our about page.”

Here’s what I think the next 12 months look like. AI-driven optimization becomes a service offering. Not “we’ll write your code faster” but “we’ll analyze your system’s performance bottlenecks and fix them using autonomous AI agents.” The model does the analysis. You do the quality control and client communication. The economics are different because you’re selling measurable output, not hours of work.

The catch is that this requires you to actually understand what the AI is doing. You can’t sell autonomous optimization if you don’t understand flame graph analysis or thread topology. The operators who will win in the next phase aren’t the ones who know how to prompt well. They’re the ones who understand systems well enough to direct the AI toward real bottlenecks.

That said, you don’t need to be a systems engineer to start. You need to be curious enough to look at the output and ask whether it makes sense. The model did 12 hours of analysis. Someone has to verify the output is correct and doesn’t break anything. That’s a different skill than writing the code in the first place.
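One way to make that verification step concrete is an acceptance gate: a change from the model only goes forward if the test suite still passes and the measured gain clears a threshold you set. The sketch below assumes you already have repeated throughput samples from before and after the change. The names (`Verdict`, `evaluate_change`) and the 5% default threshold are my own assumptions for illustration, not part of any K2.6 tooling.

```python
from dataclasses import dataclass
from statistics import median
from typing import Sequence


@dataclass
class Verdict:
    accepted: bool
    reason: str


def evaluate_change(
    before: Sequence[float],
    after: Sequence[float],
    tests_passed: bool,
    min_gain_pct: float = 5.0,  # assumed threshold; tune it per client
) -> Verdict:
    """Accept a change only if tests pass and median throughput improves enough."""
    if not tests_passed:
        return Verdict(False, "test suite failed")
    if not before or not after:
        return Verdict(False, "missing benchmark samples")
    base = median(before)
    if base <= 0:
        return Verdict(False, "baseline throughput must be positive")
    gain = (median(after) - base) * 100 / base
    if gain < min_gain_pct:
        return Verdict(False, f"gain {gain:.1f}% is below the {min_gain_pct:.1f}% threshold")
    return Verdict(True, f"median throughput up {gain:.1f}%")


# Hypothetical samples in requests per second, several runs each.
print(evaluate_change([100, 102, 98], [150, 149, 151], tests_passed=True))
```

Comparing medians over several runs keeps a single noisy run from deciding the outcome. It doesn't replace reading the diff, but it gives you a number to put in front of the client.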

What You Should Actually Do

Three things, in order of priority.

First, if you’re running production systems for clients, run K2.6 against something non-critical and see what happens. Don’t point it at your database. Don’t touch billing systems. Pick something in the stack that’s been running okay but could be faster. The model is open source. The benchmarks are real. The learning value alone is worth the time.

Second, if you’re deploying on Vercel, this is already available and Vercel is recommending it. That’s not a future promise. That’s a today action. Try it on your next feature branch and compare deployment times.

Third, think about whether your agency’s service offerings need to evolve. “AI-powered development” as a marketing term is about 18 months past expiration. The clients who care about AI are asking harder questions now. “Can AI find our performance bottlenecks?” “Can AI optimize our database queries?” “Can AI reduce our infrastructure costs?” These are answerable now in ways they weren’t last year.

The agencies winning in 2026 aren’t the ones who adopted AI fastest. They’re the ones who figured out which AI capabilities actually map to client problems. This week’s release is a new data point in that calculation.

Sources: Kimi Blog: K2.6 | HN Discussion