Remember when you had to beg Microsoft for access to GPT-4?

Yeah. That was 2024.

The deal was simple. Azure or nothing. Sign the enterprise agreement. Eat the compliance costs. Accept whatever they charged because there was no alternative. That lock-in felt permanent. It wasn’t.

This week, OpenAI showed up on AWS Bedrock.

GPT-5.5. Codex. The whole catalog. If you’re already paying AWS for infrastructure, you can now light up OpenAI models against that spend. No new vendor. No new security review. No three-hour sales call where someone tries to upsell you on a committed spend minimum.

This is the biggest change in how small teams get frontier AI since ChatGPT went viral.

Nobody’s framing it right, though.

The Take Everyone’s Getting Wrong

The headlines say OpenAI won. They took their ball and went multi-cloud. Microsoft lost leverage. Blah blah.

Here’s the thing nobody’s mentioning. Microsoft didn’t lose this deal.

They restructured it.

Microsoft gave up exclusivity, sure. But they kept 27% of OpenAI equity. They stopped paying OpenAI revenue share. OpenAI still cuts Microsoft 20% of revenue through 2030. The old arrangement had no cap. The new one does.

Microsoft traded exposure to unlimited downside for a floor they couldn’t negotiate out of.

They got paid to walk away from a bet that was already turning sour.

Azure OpenAI endpoints have been unreliable for months. Enterprise buyers were working around them anyway. Microsoft grabbed certainty and called it strategy.

That’s not losing. That’s winning ugly.

The Actual Story Is the Procurement Wall

Here’s what changes for your team right now.

If you run workloads on AWS, OpenAI just became a checkbox, not a procurement project.

That matters more than the partnership drama. Compliance reviews take weeks. Legal sign-offs take months. Enterprise teams have dedicated people doing vendor management. You don’t.

The operator who couldn’t justify a separate Azure contract just got their excuse vaporized.

The shop that was stuck waiting for IT to approve a new AI vendor can now flip a switch in Bedrock and ship.

The bottleneck wasn’t model quality. Never was. It was the friction of going multi-vendor. That’s gone now.

The Price Race Just Got Real

Three frontier model families competing for the same billing dollar.

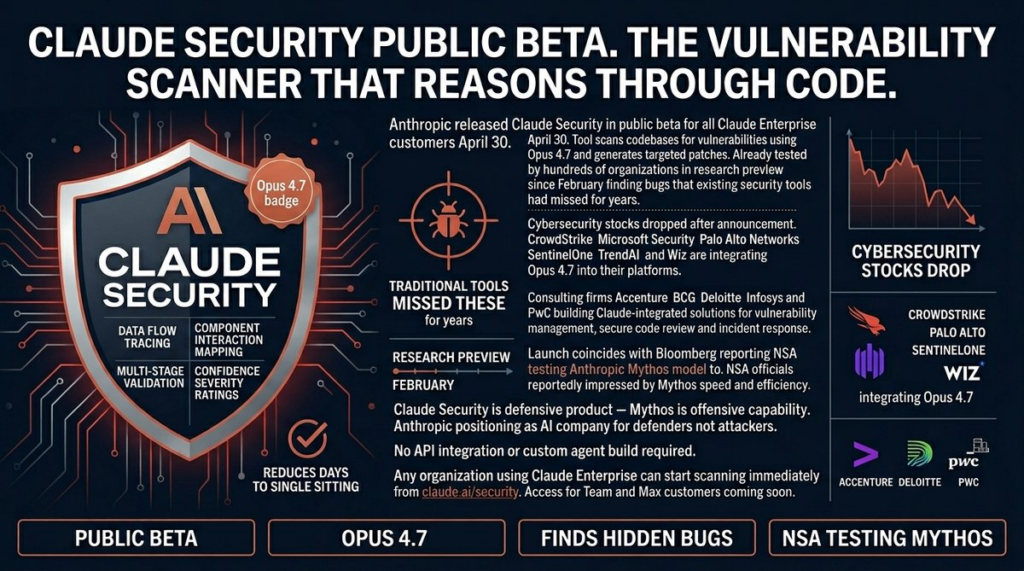

DeepSeek V4 Flash at roughly $0.14 per million tokens. GPT-5.5 mid-tier. Claude Opus 4.7 for the hard stuff. All in the same portal you already pay for.

You don’t have to pick one. Route easy tasks to the cheap stuff. Save the expensive model for what actually needs it. A fifteen-person agency handling five hundred chatbot interactions a month could push most of that through DeepSeek and halve their AI bill without touching the product.

Multi-model routing isn’t clever anymore.

It’s just ops.
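Here’s roughly what that routing looks like as a sketch, not a finished system. The tier names, the `Task` fields, and the 2,000-character threshold are all made up for illustration. The only price taken from this article is the roughly $0.14 per million tokens for the cheap tier; the other two prices are placeholders you should swap for your real rates.

```python
from dataclasses import dataclass

# USD per million tokens. Only the "cheap" figure comes from the article
# (DeepSeek V4 Flash, roughly $0.14). The other two are hypothetical
# placeholders: replace them with the rates on your own bill.
PRICE_PER_MILLION = {
    "cheap": 0.14,
    "mid": 5.00,       # placeholder, not a quoted price
    "premium": 25.00,  # placeholder, not a quoted price
}


@dataclass
class Task:
    prompt: str
    needs_reasoning: bool = False  # e.g. multi-step analysis
    customer_facing: bool = False  # e.g. replies a client will read


def pick_tier(task: Task) -> str:
    """Send each task to the cheapest tier that is likely good enough."""
    if task.needs_reasoning:
        return "premium"
    if task.customer_facing or len(task.prompt) > 2000:
        return "mid"
    return "cheap"


def estimate_cost(tier: str, tokens: int) -> float:
    """Estimated USD cost of running `tokens` tokens through a tier."""
    return PRICE_PER_MILLION[tier] * tokens / 1_000_000


if __name__ == "__main__":
    tasks = [
        Task("Summarize this support ticket."),
        Task("Draft a reply to the client.", customer_facing=True),
        Task("Audit this contract for conflicting clauses.", needs_reasoning=True),
    ]
    for task in tasks:
        print(pick_tier(task), "<-", task.prompt)
```

The rules are deliberately dumb. Start with a few explicit conditions, log which tier each request lands in, and tighten the rules once you see where the cheap model falls short on your own traffic.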

Side note: AWS Bedrock’s documentation is still a mess. Multi-region support is half-baked. Don’t trust the UI alone. Check the API docs directly or you’ll miss which regions actually support which models.
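One way to check regions without the console is to ask the API itself. The sketch below uses boto3’s `bedrock` client and its `list_foundation_models` call, filtering on a substring of the model ID. The helper name `models_by_region`, the region list, and the `"openai"` fragment are assumptions for illustration, and a model showing up in the listing does not guarantee your account has been granted access to it.

```python
from typing import Callable, Dict, Iterable, List


def default_client_factory(region: str):
    """Create a Bedrock control-plane client for one region."""
    import boto3  # imported lazily so the helper is testable without AWS

    return boto3.client("bedrock", region_name=region)


def models_by_region(
    regions: Iterable[str],
    name_fragment: str,
    client_factory: Callable = default_client_factory,
) -> Dict[str, List[str]]:
    """Map each region to the model IDs that contain `name_fragment`."""
    found: Dict[str, List[str]] = {}
    for region in regions:
        client = client_factory(region)
        summaries = client.list_foundation_models().get("modelSummaries", [])
        found[region] = sorted(
            s["modelId"]
            for s in summaries
            if name_fragment.lower() in s["modelId"].lower()
        )
    return found


if __name__ == "__main__":
    # Region names and the "openai" fragment are example inputs only.
    for region, ids in models_by_region(["us-east-1", "us-west-2"], "openai").items():
        print(region, ids or "nothing listed")
```

Running it needs AWS credentials with permission to list foundation models. An empty list for a region means the listing returned nothing matching in that region, which is exactly the thing the console makes easy to miss.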

Speed Is Your Only Advantage

Enterprise IT is still running evaluations. Fortune 500 procurement is still writing RFIs. Committee meetings are scheduled.

By the time they finish their process, you could already have three models in production.

Do this today. Pull your current AI spend. Every dollar going to a vendor you don’t already have a relationship with is a dollar you might fold into AWS commitments you’re already making. Check Bedrock. Test the models against your actual workload, not whatever TechCrunch is benchmark-whoring over this week.

Don’t sign annual contracts with anyone right now.

Prices are moving. The market shifted this week. Your future negotiating leverage depends entirely on not locking yourself in before competition gets serious.

The overhead just dropped.

You already know what to do.